
How Tallyfy Analytics works

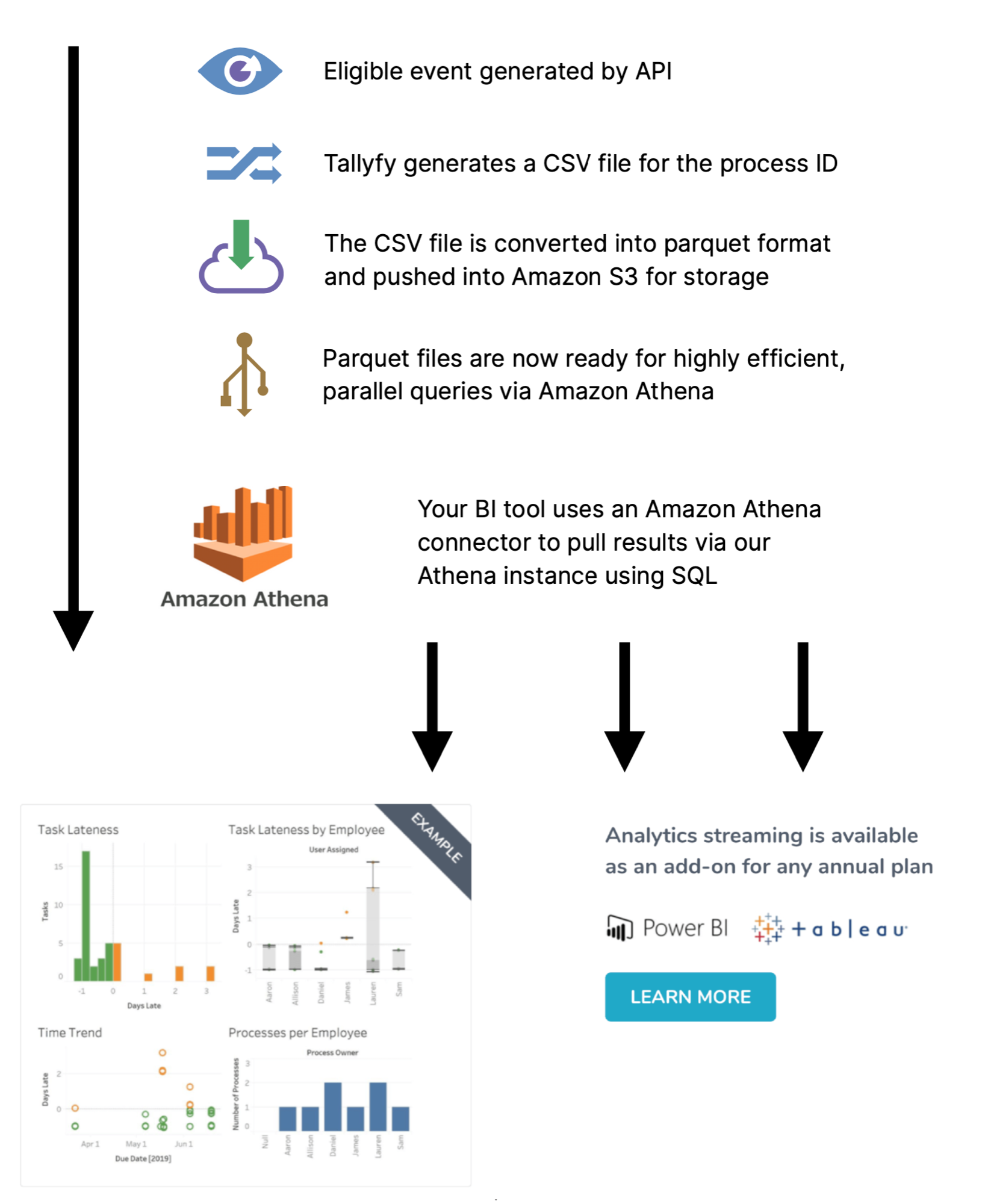

Tallyfy Analytics transforms your workflow data into a format that BI tools like Power BI and Tableau can query directly. Here’s how your data travels from Tallyfy to those tools.

Your data goes through five steps to become analytics-ready:

- Detecting an event - Tallyfy watches for changes like task completions, process status updates, and user changes.

- Extracting the data - A complete snapshot of the process where the event occurred gets captured.

- Converting the format - Data is converted to Apache Parquet for fast, compressed analysis.

- Storing securely - Everything lands in your private Amazon S3 storage bucket.

- Providing access - You receive AWS credentials to connect your BI tools.

Tasks get completed. Statuses change. Forms get submitted. Processes are created, archived, or restored. When these actions happen in Tallyfy, the system queues them for analytics processing.
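To make the queuing step concrete, here is a minimal sketch of an event queue that accepts only tracked actions. The event names, the `Event` class, and the `AnalyticsQueue` class are all hypothetical illustrations; Tallyfy's internal implementation is not documented here.

```python
from collections import deque
from dataclasses import dataclass

# Hypothetical event names, loosely based on the actions listed above.
TRACKED_EVENTS = {
    "task.completed",
    "process.status_changed",
    "form.submitted",
    "process.created",
    "process.archived",
    "process.restored",
}


@dataclass(frozen=True)
class Event:
    kind: str        # expected to be one of TRACKED_EVENTS
    process_id: str  # the process the event happened in


class AnalyticsQueue:
    """FIFO queue that accepts only tracked event kinds."""

    def __init__(self):
        self._pending = deque()

    def enqueue(self, event):
        """Queue a tracked event; return False for untracked kinds."""
        if event.kind not in TRACKED_EVENTS:
            return False
        self._pending.append(event)
        return True

    def drain(self):
        """Return the process IDs awaiting a snapshot, in arrival order."""
        ids = []
        while self._pending:
            ids.append(self._pending.popleft().process_id)
        return ids
```

The sketch does not deduplicate process IDs, so two events in the same process yield two entries in the drained list.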

Each queued event triggers a full process snapshot. The export captures:

- Process metadata (owner, status, template name/version, tags, completion timestamps)

- Every task’s status, assignees, and due dates

- Form field questions and answers

- User and guest assignments (including group-based assignments)

- Comments and reported issues

This data is initially saved as a CSV file.
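As a rough illustration of what flattening a snapshot into CSV can look like, the sketch below writes one row per task. The column names and the shape of the `process` dictionary are assumptions made for this example; the actual export schema may differ.

```python
import csv
import io

# Hypothetical column names; the real export schema may differ.
COLUMNS = [
    "process_id",
    "process_status",
    "task_name",
    "task_status",
    "assignee",
    "due_date",
]


def snapshot_to_csv(process):
    """Flatten one process snapshot into CSV text, one row per task."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS, lineterminator="\n")
    writer.writeheader()
    for task in process["tasks"]:
        writer.writerow({
            "process_id": process["id"],
            "process_status": process["status"],
            "task_name": task["name"],
            "task_status": task["status"],
            # Missing values are written as empty cells.
            "assignee": task.get("assignee", ""),
            "due_date": task.get("due_date", ""),
        })
    return buffer.getvalue()
```

Process-level fields repeat on every row, which keeps each row self-describing at the cost of some redundancy.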

CSV alone won’t cut it for serious analytics. Tallyfy automatically converts each CSV to Apache Parquet, a columnar file format, compressed with Snappy. The resulting Parquet file:

- Queries much faster than raw CSV files

- Takes up significantly less storage space

- Works natively with Power BI, Tableau, and other BI tools
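If you want to reproduce this kind of conversion on a sample file of your own, a minimal sketch using the third-party `pyarrow` package might look like the following. This is not Tallyfy's conversion code, and the helper names and file paths are hypothetical.

```python
from pathlib import Path


def parquet_path_for(csv_path):
    """Return the sibling .parquet path for a .csv file."""
    return str(Path(csv_path).with_suffix(".parquet"))


def convert_csv_to_parquet(csv_path):
    """Read a CSV file and write it as Snappy-compressed Parquet."""
    # pyarrow is a third-party package (pip install pyarrow). It is
    # imported here so the path helper above works without it.
    import pyarrow.csv as pa_csv
    import pyarrow.parquet as pq

    table = pa_csv.read_csv(csv_path)  # column types are inferred
    out_path = parquet_path_for(csv_path)
    pq.write_table(table, out_path, compression="snappy")
    return out_path
```

Calling `convert_csv_to_parquet("sample.csv")` would write `sample.parquet` next to the input file and return that path.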

Your Parquet files land in a dedicated S3 bucket:

- Each organization gets a private folder - no other accounts can access it

- AWS encryption at rest protects stored data

- Both the Parquet files and original CSVs are stored for redundancy

- Files stay available throughout your Tallyfy Analytics subscription

Tallyfy provides you with AWS IAM credentials that let your BI tools connect. With these credentials, you can:

- Query your data through Amazon Athena without managing any database infrastructure

- Run SQL queries against your process data

- Use standard JDBC/ODBC connections (supported by virtually every BI tool)

- Build dashboards and reports on top of the queried data
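BI tools normally handle the Athena connection for you, but running a query by hand is a useful way to check your credentials. The sketch below assumes an Athena client created with the third-party `boto3` package. The database name, table name, region, and results location in the usage comment are placeholders, not values confirmed by Tallyfy.

```python
import time


def run_athena_query(athena, sql, database, output_location, poll_seconds=1.0):
    """Start a query, wait for it to finish, and return the first page of rows."""
    started = athena.start_query_execution(
        QueryString=sql,
        QueryExecutionContext={"Database": database},
        ResultConfiguration={"OutputLocation": output_location},
    )
    query_id = started["QueryExecutionId"]

    # Poll until Athena reports a terminal state.
    while True:
        status = athena.get_query_execution(QueryExecutionId=query_id)
        state = status["QueryExecution"]["Status"]["State"]
        if state in ("SUCCEEDED", "FAILED", "CANCELLED"):
            break
        time.sleep(poll_seconds)

    if state != "SUCCEEDED":
        raise RuntimeError(f"Athena query {query_id} ended in state {state}")

    # Only the first page of results is fetched here. For SELECT queries
    # the first row holds the column names.
    results = athena.get_query_results(QueryExecutionId=query_id)
    return results["ResultSet"]["Rows"]


# Usage (placeholder values; requires `pip install boto3`):
#   import boto3
#   athena = boto3.client(
#       "athena",
#       region_name="us-east-1",
#       aws_access_key_id="YOUR_ACCESS_KEY_ID",
#       aws_secret_access_key="YOUR_SECRET_ACCESS_KEY",
#   )
#   rows = run_athena_query(
#       athena,
#       "SELECT * FROM your_table LIMIT 10",
#       database="your_database",
#       output_location="s3://your-results-bucket/path/",
#   )
```

Passing the client in as a parameter keeps the function easy to test with a stub and leaves credential handling to the caller.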

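The complete data flow, in order: an event in Tallyfy, a process snapshot saved as CSV, conversion to Parquet, storage in your private S3 folder, queries through Amazon Athena, and finally your BI tool.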

A few things to know before you start:

- Data processing begins the moment Tallyfy Analytics is activated on your account - not before

- You’ll receive your AWS IAM credentials right after activation

- Most BI tools can be connected in about 15 minutes using standard JDBC/ODBC drivers

- Need data stored in a specific region or format? Contact Tallyfy support first
